A global industrial company with 22,000 employees across 31 countries closed a European acquisition in January. Sixty days later, the CHRO asked L&D how fast every new hire could clear the combined safety curriculum in their own language, on budget. The old approach was 340 hours of English-default video, handed to three LSPs, quoted at eighteen months and $3.8 million. That is not a plan; it is the gap between what boards now expect from global L&D and what traditional localization workflows can deliver.

When It Is Actually Time to Localize

Most programs fail at the start because they start for the wrong reason. A regional head asks for Portuguese onboarding, an auditor flags a gap, a product launch wants regional demos, and within weeks, L&D has a backlog with no priority logic.

Four triggers justify a formal program. First, a step-change in global hiring: when the workforce shifts from 70% concentrated in one language to 40% distributed across three or more, non-English hires take 20% to 40% longer to reach full productivity, and that shows up in ramp dashboards before anyone calls it a localization problem. Second, a merger or acquisition: integrations are the highest-ROI moment because every day of lag between close and competent operation is a measurable economic loss. Third, a compliance mandate: regulators in the EU, Brazil, India, Japan, and elsewhere increasingly require mandatory training in a language the worker understands, and the liability for English-only safety content is no longer theoretical. Fourth, a regional engagement signal: when a region shows completion rates 15 or more points below the global average, the data is already making the case.

If none of the four is present, a targeted subtitle rollout on high-priority content is usually the right move.
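The fourth trigger is the easiest to check mechanically. The following is a minimal sketch, assuming completion rates are available per region as percentages; the function name, the sample figures, and the use of an unweighted mean as the "global average" are illustrative assumptions (a real dashboard would likely weight by headcount).

```python
from statistics import mean

# From the text: a region 15+ points below the global average is a signal.
GAP_THRESHOLD_POINTS = 15.0


def regions_below_threshold(completion_by_region, threshold=GAP_THRESHOLD_POINTS):
    """Return regions whose completion rate trails the average by >= threshold points.

    Assumption: the global average is the unweighted mean of regional rates.
    """
    global_avg = mean(completion_by_region.values())
    return sorted(
        region
        for region, rate in completion_by_region.items()
        if global_avg - rate >= threshold
    )


# Hypothetical completion rates in percent, for illustration only.
sample = {"DACH": 88.0, "LATAM": 61.0, "APAC": 84.0, "NA": 91.0}
flagged = regions_below_threshold(sample)
print(flagged)
```

With these made-up numbers the average is 81, so only the region sitting 20 points below it is flagged.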

The Four-Tier Prioritization Framework

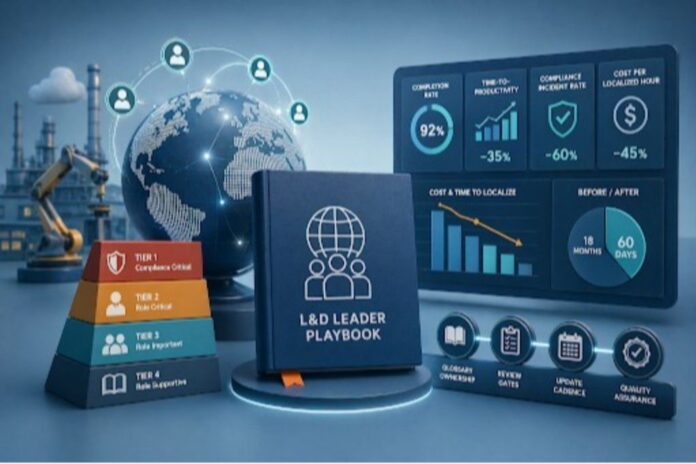

Once localization is on the agenda, the consequential decision is what to localize first. The wrong instinct is to translate whatever the loudest stakeholder asks for. A stronger approach ranks content by legal risk, role criticality, and strategic value. Tiers are sequenced: Tier 1 finishes before Tier 2 starts, and nothing in Tier 4 ships until the first three tiers cover the active workforce.

- Tier 1: Mandatory compliance: Any module for which a regulator could demand evidence of comprehension: manufacturing safety, HIPAA, anti-bribery, code of conduct, GDPR, and sector-specific certifications. If the worker cannot do their job legally without it, it is Tier 1. This tier gets full dubbed audio in every language spoken by at least 5% of the workforce in that role. Subtitle-only is not defensible here.

- Tier 2: Onboarding and role-specific skills: Everything a new hire must absorb to hit baseline productivity, plus technical skill training. This tier drives time-to-productivity directly. Rule of thumb: every week of onboarding delay for a non-English hire costs 1% to 2% of the first-year salary in lost output. For a 10,000-person enterprise with 1,500 annual hires across six languages, Tier 2 often pays for the entire program in year one.

- Tier 3: Leadership, culture, and strategic communication: Executive town halls, CEO updates, leadership development, values content, and change management. Retention of the message and alignment with stated strategy drop sharply when international workers watch a subtitled leadership video versus a dubbed one in their primary language. The payoff shows up in engagement scores and in whether the parent company is perceived to show up for its international workforce.

- Tier 4: Optional and elective: Soft skills, wellness, and book summaries. Subtitle-only, or English-only with clear opt-in. Full dubbing of Tier 4 is almost always a budget misallocation.
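The Tier 2 rule of thumb (1% to 2% of first-year salary per week of onboarding delay) can be worked as simple arithmetic. This is a rough sketch only: the function name, the salary, and the two-week delay are hypothetical inputs, not figures from the text.

```python
def onboarding_delay_cost(annual_salary, weeks_delayed, pct_per_week):
    """Lost output for one hire, using the per-week percentage rule of thumb."""
    return annual_salary * weeks_delayed * pct_per_week / 100


# Hypothetical hire: 60,000 first-year salary, onboarding delayed by 2 weeks.
low = onboarding_delay_cost(60_000, 2, 1)   # 1% per week
high = onboarding_delay_cost(60_000, 2, 2)  # 2% per week
print(f"Estimated lost output per hire: {low:,.0f} to {high:,.0f}")
```

Multiplying the per-hire range by the number of non-English hires per year gives the figure to compare against the Tier 2 localization budget.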

The discipline the model enforces is that no Tier 2 content is localized while Tier 1 gaps exist, and no Tier 3 request jumps ahead of Tier 2 coverage. When stakeholders push back, L&D points to a framework rather than a queue that rewards internal politics. A secondary benefit: the tiers tell procurement what to buy. Tier 1 needs human-in-the-loop review; Tier 2 justifies an AI-heavy workflow with spot review; Tiers 3 and 4 run on a full AI workflow with light QA. Collapsing those three cost profiles into a single procurement line produces either under-qualified compliance content or over-spent soft skills content.
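The sequencing rule and the per-tier review model can be captured as a small policy table plus a gate check. This is a sketch under stated assumptions: the class and function names are hypothetical, and "coverage complete" is treated as a simple boolean per tier, which a real program would derive from workforce and content data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPolicy:
    name: str
    review: str  # review model the text assigns to the tier


TIER_POLICIES = {
    1: TierPolicy("Mandatory compliance", "human-in-the-loop review"),
    2: TierPolicy("Onboarding and role-specific skills", "AI-heavy workflow with spot review"),
    3: TierPolicy("Leadership, culture, and strategic communication", "full AI workflow with light QA"),
    4: TierPolicy("Optional and elective", "full AI workflow with light QA"),
}


def may_start(tier, coverage_complete):
    """A tier may start only when every lower-numbered tier is fully covered."""
    return all(coverage_complete.get(t, False) for t in TIER_POLICIES if t < tier)


def next_tier_to_localize(coverage_complete):
    """Return the lowest-numbered tier with a coverage gap, or None if all are covered."""
    for tier in sorted(TIER_POLICIES):
        if not coverage_complete.get(tier, False):
            return tier
    return None


# Hypothetical status: Tier 1 is covered, Tier 2 still has gaps.
status = {1: True, 2: False}
print(next_tier_to_localize(status), may_start(3, status))
```

Keeping the review model in the same table as the tier definition makes it harder for a request to move up the queue without also inheriting the right level of review.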

Build, Buy, or Hybrid

Three operating models exist; none is correct in isolation.

- In-house translation teams give full control over quality and confidentiality but run $200 to $400 per minute of finished content. They make sense for very high-sensitivity content in one or two languages, not as the primary engine for a library with more than five target languages.

- Traditional LSPs charge $75 to $250 per minute with a 4 to 12 week turnaround. They remain useful for highly regulated content where audit-ready translation memory and certified linguists are required, but do not scale to libraries of hundreds of videos across ten languages with quarterly refresh cycles.

- Pure AI platforms deliver end-to-end transcription, translation, voice cloning, dubbing, and lip-sync in under an hour at $20 to $75 per minute, an 80% to 95% delta versus LSPs. Pure AI fits Tiers 2, 3, and 4 but needs a human-in-the-loop layer for Tier 1.

- Hybrid with human-in-the-loop review is what most mature L&D functions are standardizing on in 2026. AI produces the first pass in minutes; a bilingual SME validates Tier 1 before publication; Tier 2 goes through spot-check review; Tiers 3 and 4 ship with automated QA. Total cost lands between $40 and $120 per minute, with a turnaround in days.

Build-versus-buy has effectively collapsed into a platform-and-review-model question: which AI platform is the production backbone, and what governance sits on top of it by tier. Platform selection at enterprise scale comes down to six capabilities: multi-speaker detection for instructor-plus-SME videos, a centrally managed translation dictionary for brand and compliance terminology, voice cloning so recurring instructors remain recognizable, API integration with the LMS stack, SOC 2 Type II and GDPR posture, and a workflow layer that supports reviewer roles and approval gates. Not every platform covers all six. A purpose-built enterprise L&D localization platform consolidates these into a single production path, which is what makes hybrid governance practical rather than aspirational. Teams that have made the shift report 3x to 5x faster global launches and roughly 70% lower localization cost than the prior LSP baseline.

Useful heuristic: if the library produces more than 50 hours of new or updated content per year across four or more languages, a hybrid AI-primary model beats every alternative on cost, speed, and consistency, with break-even inside the first quarter.
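The per-minute ranges and the 50-hour heuristic above can be combined into a quick comparison. This is a minimal sketch: the dictionary keys and function names are hypothetical, and it assumes the quoted per-minute rates apply per finished minute per target language, which the text does not state explicitly.

```python
# Per-minute cost ranges in USD, as quoted in the text for each operating model.
COST_PER_MINUTE_USD = {
    "in_house": (200, 400),
    "traditional_lsp": (75, 250),
    "pure_ai": (20, 75),
    "hybrid": (40, 120),
}


def annual_cost_range(model, hours_per_year, languages):
    """Low and high annual cost, assuming rates apply per minute per language."""
    low, high = COST_PER_MINUTE_USD[model]
    minutes = hours_per_year * 60 * languages
    return low * minutes, high * minutes


def hybrid_heuristic_applies(hours_per_year, languages):
    """The text's heuristic: more than 50 hours per year across four or more languages."""
    return hours_per_year > 50 and languages >= 4


# Hypothetical library: 60 hours of new or updated content per year, 5 languages.
for model in ("traditional_lsp", "hybrid"):
    low, high = annual_cost_range(model, 60, 5)
    print(f"{model}: ${low:,} to ${high:,}")
print(hybrid_heuristic_applies(60, 5))
```

The ranges overlap at the edges, so the comparison is most useful when the low and high ends are checked against actual vendor quotes.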

Pilot, Then Scale

With over 75% of global learners and consumers preferring content in their native language, multilingual training is no longer optional: it is a performance and compliance requirement, not a localization preference. That preference reinforces the case for structured, tiered localization over ad hoc translation.

The fastest way to kill a program is to scale it before validating it. A credible pilot is narrow and instrumented: one content type, two or three languages, one success metric committed in writing before the pilot starts. Not a full-onboarding curriculum in nine languages with vague “improved engagement” as the goal.

One content type (a safety onboarding sequence, an executive video series, or a product certification module) controls for subject-matter variables. Two or three languages, drawn from the top workforce concentrations plus one stretch language, surface the differences between high-resource languages (Spanish, Mandarin, German) and lower-resource ones (Vietnamese, Hindi, Tagalog). The most useful metrics are completion rate delta, assessment score delta, or SME-rated translation accuracy on a defined rubric. Teams that set “we’ll see how it goes” as the goal almost always declare victory regardless of what the data shows.

Instrument for attribution: serve the localized version to a defined cohort and hold a matched cohort on the original, tracking completion, score, and time-to-competency across both. Without a control group, the lift could be anything from localization to seasonal variation. Stage the review workflow during the pilot, not after: the governance layer, not the platform, is what breaks first at scale. Close with a go/no-go decision inside 45 days.
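The cohort comparison can be reduced to two deltas checked against the target committed before the pilot. The sketch below is illustrative only: the `Cohort` class, the function names, and all sample figures are hypothetical, and it does no significance testing, which a real pilot with small cohorts would need.

```python
from dataclasses import dataclass


@dataclass
class Cohort:
    enrolled: int
    completed: int
    mean_score: float  # mean assessment score, 0 to 100

    @property
    def completion_rate(self):
        """Completion rate in percent."""
        return 100.0 * self.completed / self.enrolled


def pilot_lift(treatment, control):
    """Deltas between the localized cohort and the matched control cohort."""
    return {
        "completion_delta_points": treatment.completion_rate - control.completion_rate,
        "score_delta": treatment.mean_score - control.mean_score,
    }


def go_decision(lift, committed_completion_target):
    """Go only if the lift meets the target written down before the pilot."""
    return lift["completion_delta_points"] >= committed_completion_target


# Hypothetical pilot data, for illustration only.
localized = Cohort(enrolled=200, completed=170, mean_score=82.0)
original = Cohort(enrolled=200, completed=120, mean_score=74.0)
lift = pilot_lift(localized, original)
print(lift, go_decision(lift, committed_completion_target=15.0))
```

Passing the committed target in as an explicit argument keeps the success criterion from drifting once the results are in.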

Scale-out then tests governance, and the model a program ships with is the one it lives with for three years. Four elements matter most: named ownership of glossary and terminology per language with change control; voice and brand guidelines for any cloned instructors, including consent scope and retirement; written review gates by tier (mandatory SME review with audit log for Tier 1; 10% to 20% spot-check SLA for Tier 2; automated QA plus feedback channel for Tiers 3 and 4); and a continuous update cadence: quarterly glossary reviews, an annual content refresh, and a quarterly refresh for regulatory content. Over-invest in governance in the first two quarters. It feels slow relative to the speed AI enables, but it is what makes the economics sustainable.

Metrics the CFO Will Actually Read

Programs get defunded for two reasons: they cost more than expected, or they cannot prove they worked. Four metrics carry the weight, each with a clean pre-program baseline.

- Completion rate by language. Segment by primary language of the learner, not region or LMS instance. Most programs see a 20 to 40 point completion lift for previously underserved language groups within two quarters, which is the most convincing data point for continued investment.

- Time-to-productivity by language cohort. This is the metric tied most directly to hiring economics. Days of ramp reduction times daily fully loaded cost translates straight into dollars. A two-week ramp reduction for the non-English pipeline at a 10,000-person enterprise commonly produces seven-figure annualized savings.

- Compliance incident rate. For regulated industries, this is the political protection. Incidents attributable to training comprehension gaps should be tracked with a clear link back to the module involved. A program that can show a declining incident curve after rollout is functionally unkillable.

- Cost per localized hour, year over year. The efficiency metric. Programs moving from traditional LSP economics to hybrid AI typically see per-hour cost drop 70% to 85% over 12 to 18 months. Report it alongside output volume so the comparison is defensible.
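Two of these metrics are plain arithmetic and can be computed the same way every quarter. The sketch below assumes hypothetical inputs throughout: the function names, the daily cost, the hire count, and the per-hour costs are made up for illustration and are not figures from the text.

```python
def ramp_savings(days_reduced, daily_loaded_cost, hires):
    """Days of ramp reduction times daily fully loaded cost, across all hires."""
    return days_reduced * daily_loaded_cost * hires


def cost_per_localized_hour(total_spend, localized_hours):
    """Total localization spend divided by localized output hours."""
    return total_spend / localized_hours


def pct_change(before, after):
    """Year-over-year change in percent; negative means the cost went down."""
    return 100.0 * (after - before) / before


# Hypothetical: two working weeks (10 days) saved, 400 per day, 300 non-English hires.
savings = ramp_savings(10, 400.0, 300)

# Hypothetical year-over-year cost per localized hour.
last_year = cost_per_localized_hour(1_000_000, 100)  # 10,000 per hour
this_year = cost_per_localized_hour(1_000_000, 400)  # 2,500 per hour
change = pct_change(last_year, this_year)
print(f"Ramp savings: {savings:,.0f}; cost per hour change: {change:.0f}%")
```

The second example holds spend flat while output grows, which is why the text recommends reporting cost per hour alongside output volume.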

Learner engagement scores and review cycle time belong in the operating dashboard as early-warning indicators, but the four above are what travel to the CFO and the CHRO. A tight quarterly report with these numbers and a two-paragraph narrative does more for program survival than any demo. CFOs read dashboards; they do not read hype.