Anthropic introduced the Model Context Protocol (MCP) in November 2024 and donated it to the Linux Foundation’s Agentic AI Foundation in December 2025. MCP is an open-source standard designed to let AI models connect securely with external tools, databases, and software. The last 12 months have seen an explosion in adoption, with an ever-growing number of companies announcing new integrations based on MCP.

Even some of the biggest names in AI, such as OpenAI, use MCP, and you’ve also got major tech firms like Stripe, Neo4j, and Cloudflare developing MCP servers to expose their services to AI agents.

That said, some organizations are battling to keep up with the protocol’s numerous security implications. Data from vulnerablemcp.info identifies 50 total vulnerabilities, including 13 critical severity issues and 24 tracked CVEs within the last year.

Read on for a comprehensive overview of MCP security and recommended approaches to adopt.

Key Takeaways

- The Model Context Protocol (MCP) connects AI models to external systems, offering a standardized communication method.

- While many companies, including OpenAI and Stripe, adopt MCP, organizations must tackle its associated security risks.

- Known vulnerabilities include SQL injection, credential exposure, and prompt injection that could harm systems.

- To secure MCP infrastructure, platforms like DataDome offer real-time protection against evolving threats.

- As enterprise software increasingly adopts agentic capabilities, managing MCP security will become crucial.

A Simple Explanation of Model Context Protocol

MCP underpins several relatively new AI implementations you might have heard of, with agentic AI being among the most talked about.

Essentially, MCP standardizes how complex AI systems communicate with external systems. Those external systems include databases, dev tools, online services, and files on servers or local computers.

MCP uses a client-server architecture where:

- The MCP client runs alongside a large language model (LLM) like Claude or Gemini.

- The MCP server runs alongside your tools, databases, and APIs.

- JSON-RPC 2.0 is the message format between client and server, carried over a transport such as HTTP.
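To make the message format concrete, here is a minimal sketch of how a client might build a tools/call request as a JSON-RPC 2.0 message. The tool name `check_balance` and the `account_type` argument are hypothetical and only mirror the banking example used in this article; a real client would also handle initialization and the transport itself.

```python
import json


def build_tools_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    message = {
        "jsonrpc": "2.0",        # protocol version marker required by JSON-RPC 2.0
        "id": request_id,        # lets the client match the response to this request
        "method": "tools/call",  # MCP method used to invoke a tool
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and argument, for illustration only.
wire = build_tools_call(1, "check_balance", {"account_type": "savings"})
print(wire)
```

Everything the server acts on arrives inside `params`, which is why the contents of that object deserve scrutiny before any tool runs.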

As you can imagine, allowing an open-source standard to communicate with sensitive systems carries inherent security risk. The MCP client, running alongside the LLM, requests tools through the server, and that trust relationship is easily broken if a tools/call message contains malicious parameters.
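One way a server could reduce that risk is to validate tool arguments strictly before acting on them. The sketch below is an assumption-laden illustration: the argument names, the eight-character account ID format, and the allowed account types are all hypothetical, not part of MCP itself.

```python
import re

# Hypothetical rules for a hypothetical "check_balance" tool.
ACCOUNT_ID_PATTERN = re.compile(r"[A-Z0-9]{8}")
ALLOWED_ACCOUNT_TYPES = {"savings", "checking"}


def validate_check_balance_args(arguments: dict) -> dict:
    """Return a cleaned copy of the arguments, or raise ValueError."""
    # Reject missing or unexpected keys instead of silently ignoring them.
    if set(arguments) != {"account_id", "account_type"}:
        raise ValueError("unexpected or missing argument names")

    account_id = arguments["account_id"]
    account_type = arguments["account_type"]

    # fullmatch ensures the whole string fits the pattern, not just a prefix.
    if not isinstance(account_id, str) or not ACCOUNT_ID_PATTERN.fullmatch(account_id):
        raise ValueError("account_id has an invalid format")
    if not isinstance(account_type, str) or account_type not in ALLOWED_ACCOUNT_TYPES:
        raise ValueError("account_type is not allowed")

    return {"account_id": account_id, "account_type": account_type}
```

Rejecting anything that does not match an explicit allowlist is deliberately stricter than trying to detect known-bad input, since an LLM can be coaxed into producing parameters nobody anticipated.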

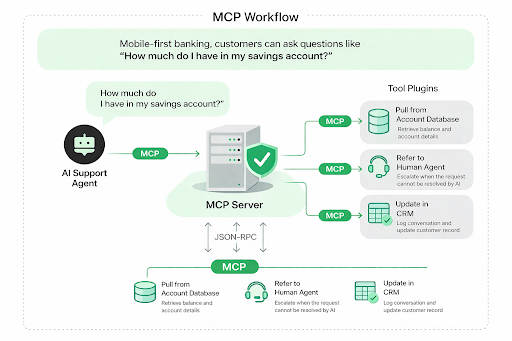

To contextualize it, we’ll use a financial institution as an example. In the era of mobile-first banking, customers can ask questions like “How much do I have in my savings account?” In response, the AI agent can ask for more details before retrieving the information from the customer’s account.

If the AI agent can’t manage the request, it will route the customer’s ticket to a human agent and log the conversation in the CRM.

Where AI Meets Action: Inside the MCP Framework

Pulling the data, referring the information to a human agent, and logging the interaction in the CRM requires the AI agent to access external systems, and that’s what MCP does: it allows AI to interact with external systems to fulfill requests and outcomes.

[Image 1: A flowchart showing the MCP workflow for connecting AI with external systems.]

MCP is the standard/protocol (rules of communication), and the MCP server is the program that implements these rules to expose data (e.g., databases, APIs, files).

As you can see from the flowchart, the MCP server controls data retrieval from the databases holding account balance information. When action is required, the MCP server converts agent requests, such as the “check balance” request in the flowchart, into API calls handled by external systems, such as account databases.

Sometimes, MCP servers can also prompt people for extra information, which helps the model produce better responses. If you’ve interacted with an online AI helpbot, you’ll know this is common.
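The conversion step described above can be sketched as a small dispatcher that receives a tools/call request and routes it to a function. This is a simplified illustration, not a full MCP server: the in-memory `BALANCES` dictionary stands in for a real account database, and the `check_balance` tool is hypothetical.

```python
import json

# Hypothetical in-memory stand-in for an account database.
BALANCES = {"ACC00001": 1250.75}


def check_balance(account_id: str) -> str:
    """Hypothetical tool: look up a balance and describe it as text."""
    return f"Balance for {account_id}: {BALANCES[account_id]:.2f}"


# Registry of tools this server exposes to clients.
TOOLS = {"check_balance": check_balance}


def _error(request_id, code: int, message: str) -> str:
    return json.dumps(
        {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}
    )


def handle_request(raw: str) -> str:
    """Route one JSON-RPC 2.0 request to a registered tool."""
    request = json.loads(raw)
    request_id = request.get("id")
    if request.get("method") != "tools/call":
        return _error(request_id, -32601, "Method not found")

    params = request.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return _error(request_id, -32602, "Unknown tool")

    try:
        text, is_error = tool(**params.get("arguments", {})), False
    except (KeyError, TypeError):
        # Report a failed tool run without leaking internal details.
        text, is_error = "Tool call failed", True

    result = {"content": [{"type": "text", "text": text}], "isError": is_error}
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})
```

Keeping an explicit registry means the server can only ever run functions it was configured to expose, whatever tool name the request asks for.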

What’s interesting is that anyone can develop an MCP server, and we’re seeing plenty of people build them to manage systems and processes through Anthropic’s Claude. If an AI model such as Claude supports MCP, anyone who understands the protocol can create an MCP server for it.

Communication still flows over standard protocols like HTTP, so it’s not necessarily complex, and that’s why we’re seeing so many companies create their own MCP servers or adopt existing ones through platforms like GitHub.

The Common Security Risks Associated With MCP

There are several well-documented security risks associated with MCP. It inherits the entire attack surface of traditional APIs: think SQL injection, command injection, path traversal, and authentication bypass. Those risks are amplified because requests are generated by LLMs, whose intelligence and adaptability make their behavior harder to predict and easier to manipulate.
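As a brief illustration of defending against the first item on that list, the sketch below uses a parameterized query so that a tool argument is always treated as data, never as SQL. The `accounts` table and its contents are made up for the example, and SQLite is used only because it ships with Python.

```python
import sqlite3

# In-memory database with a hypothetical accounts table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, balance REAL)")
conn.executemany(
    "INSERT INTO accounts VALUES (?, ?)",
    [("ACC00001", 1250.75), ("ACC00002", 90.0)],
)


def get_balance(connection: sqlite3.Connection, account_id: str):
    """Look up a balance using a placeholder instead of string formatting."""
    row = connection.execute(
        "SELECT balance FROM accounts WHERE id = ?", (account_id,)
    ).fetchone()
    return row[0] if row else None


print(get_balance(conn, "ACC00001"))
# A classic injection payload is simply an ID that matches no row.
print(get_balance(conn, "x' OR '1'='1"))
```

Had the query been assembled with string concatenation, the second call would have matched every row in the table.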

Unconfirmed third-party sources

There are hundreds, if not thousands, of MCP servers on the internet, and anyone interested can download one. That makes supply chain attacks easy for malicious actors. Transmitting data between external services through an MCP server is risky in itself, and it gets worse because developers of agentic tools often set up workflows and then forget about them.

It is easy for a malicious MCP server to impersonate an official integration with, say, a cloud database.
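One possible safeguard, sketched below, is to pin the SHA-256 digest of a server package you have reviewed and refuse to run anything that does not match. This is an illustrative assumption about your deployment process rather than a feature of MCP, and the byte strings here are placeholders for real package contents.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()


def is_trusted_artifact(data: bytes, pinned_digest: str) -> bool:
    """Compare a downloaded artifact against a digest recorded at review time."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(sha256_hex(data), pinned_digest)


# Placeholder contents standing in for a reviewed MCP server package.
reviewed = b"reviewed server package contents"
pinned = sha256_hex(reviewed)

tampered = reviewed + b" plus a backdoor"
print(is_trusted_artifact(reviewed, pinned))
print(is_trusted_artifact(tampered, pinned))
```

A pinned digest only proves the package has not changed since it was reviewed, so it complements a code review rather than replacing one.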

Exposing credentials

MCP servers have direct access to external systems via connections that require credentials such as API keys. These servers typically run locally, but their credential storage can still be an exposure point. Because one server may hold access permissions for multiple external services, keeping sensitive credentials in a centralized, properly secured store is a must.
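As a small example of keeping credentials out of code and logs, the sketch below reads an API key from the environment and redacts it before it is ever printed. The variable name `CRM_API_KEY` is hypothetical, and in practice the environment would be populated by whatever secrets manager you use.

```python
import os


def load_api_key(env_var: str = "CRM_API_KEY") -> str:
    """Read a credential from the environment instead of hardcoding it."""
    key = os.environ.get(env_var)
    if not key:
        # Fail loudly rather than falling back to a default credential.
        raise RuntimeError(f"{env_var} is not set")
    return key


def redact(secret: str, visible: int = 4) -> str:
    """Mask a secret for logging, keeping only the last few characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]


# Made-up key used purely to demonstrate redaction.
print(redact("sk-test-12345678"))
```

Logging only the redacted form still lets you tell keys apart during debugging without writing the full secret to disk.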

Prompt injection

We mentioned the prompts MCP servers can use to pass along specific instructions. Attackers can abuse the same mechanism to make the AI agent behave as it shouldn’t. Prompt injection ranks first in the OWASP Top 10 for LLM applications. In the banking example and flowchart above, a malicious prompt injection could cause AI agents to modify and corrupt financial databases.
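Prompt injection is hard to filter out reliably, so one common mitigation is to limit what an injected instruction can achieve. The sketch below shows a simple policy gate under that assumption: read-only tools run freely, tools that change data need explicit human approval, and anything unlisted is denied. All tool names are hypothetical.

```python
# Hypothetical tool names grouped by how much damage a misuse could cause.
READ_ONLY_TOOLS = {"check_balance"}
WRITE_TOOLS = {"transfer_funds", "update_crm_record"}


def authorize_tool_call(tool_name: str, human_approved: bool = False) -> bool:
    """Decide whether an agent-requested tool call may proceed."""
    if tool_name in READ_ONLY_TOOLS:
        return True
    if tool_name in WRITE_TOOLS:
        # State-changing actions require a person to confirm them first.
        return human_approved
    # Deny by default: unknown tools never run.
    return False


print(authorize_tool_call("check_balance"))
print(authorize_tool_call("transfer_funds"))
print(authorize_tool_call("transfer_funds", human_approved=True))
```

A gate like this does not stop the injection itself, but it means a manipulated agent cannot modify financial records without someone noticing.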

Research states that 43% of assessed MCP servers contained injection flaws.

Recommended Approach to Adopt for MCP Security

MCP carries security risks, but a growing understanding of how MCP functions and where those risks lie has led to advanced security solutions.

Use platforms like DataDome to secure the Model Context Protocol infrastructure

According to Cyber security times, traditional security measures are struggling to manage the nature of AI agent interactions. Dedicated solutions, such as DataDome, are at the forefront of offering protection tailored to MCP security in these complex environments.

DataDome focuses on securing the AI agent attack surface, which is especially relevant in MCP workflows. They don’t simply monitor API traffic; they analyze the intent behind each request in real time.

Considering AI agents continuously interact with external systems such as databases, APIs, and CRMs, this type of monitoring is essential to determine whether a request is legitimate or potentially malicious.

DataDome can proactively block threats such as prompt injection, credential abuse, and other forms of automated attacks before they reach sensitive systems.

DataDome’s FastMCP security integration is designed to secure this exact layer. It embeds into MCP architectures, allowing organizations to monitor and control how AI agents execute actions across connected systems. With minimal configuration required, developers can quickly add a layer of protection to workflows involving data retrieval, human escalation, or CRM updates without needing to overhaul existing infrastructure.

In addition to real-time protection, DataDome provides continuous monitoring and detailed logging of AI-driven interactions.

Conclusion

Model Context Protocol security is a massive issue, especially as businesses aren’t just using one MCP server; they’re potentially using hundreds. Gartner predicts that by 2028, one-third of enterprise software will contain agentic capabilities. All of these integrations carry inherent security risks that need controlling as the reach of MCP continues to grow.